Episode 03: What is Artificial Intelligence?

Listen on Spotify, Apple Podcasts, YouTube, or wherever you get your podcasts!

In this episode of The Data Literacy Show, hosts Ben Jones (CEO of Data Literacy) and Alli Torban (Senior Data Literacy Advocate) share the basics of Artificial Intelligence (AI). This is a super-simple primer to help you join the AI conversation!

Topics we cover:

- What is AI? Basic definitions

- Examples of AI that we use every day

- Common myths or misconceptions

If you want to learn more about AI Literacy Fundamentals, take our course or read the book!

Episode show notes: http://dataliteracy.com/episode-03

Subscribe now to never miss an episode, and let’s make data accessible for everyone!

Want to learn more about AI Literacy?

We have a book and course that will help you learn the language of AI. It’ll empower you to lay a firm foundation in the core principles of AI, its history and evolution, concepts of machine learning and deep learning, everyday applications, and ethical considerations, enabling informed discourse on AI’s societal impact and future directions.

TRANSCRIPT:

Alli Torban: [00:00:00] Welcome to the Data Literacy Show, the podcast that helps organizations build, measure, and level up their data and AI literacy.

Ben Jones: Yes, welcome. I’m Ben Jones, co-founder and CEO of Data Literacy, where we are all about helping people learn the language of data and AI through tailored training and assessments.

Alli Torban: Yes. And I am Alli Torban. I’m the Senior Data Literacy Advocate here at Data Literacy. And on today’s show we are celebrating National AI Literacy Day. Wow. Hey.

Ben Jones: Alright. What do you know? Yeah, so March 28th,

Alli Torban: 2025 is National AI Literacy Day, and we’re coming together to talk about what AI is and how it’s shaping our world.

And I thought it would be great to dedicate an episode to giving some basic definitions of artificial intelligence, and how we actually might be using AI already without even knowing it, so we can collectively increase our AI literacy today. Here, right now.

Ben Jones: Right here now. I love it. Okay. Yes, this technology, AI, you know, it’s an umbrella term. There are so many types of algorithms and applications under this term, but it’s not going away. Um, and like you said, Alli, you know, most of us are already using it. We’ve been using it in a lot of ways for actually quite some time, and we may not be aware of that. Um, and it’s on the rise too, though.

So, according to LinkedIn’s Skills on the Rise report, this is an inaugural report they put out this year, uh, just actually a week or so ago. Mm-hmm. AI literacy has been named the number one fastest growing skill in the United States for 2025. Wow. And so, you know, it’s important to have a basic understanding of what it is.

And so we need to understand what some examples of AI in use are right now, but let’s also maybe tackle some of those common myths and misconceptions.

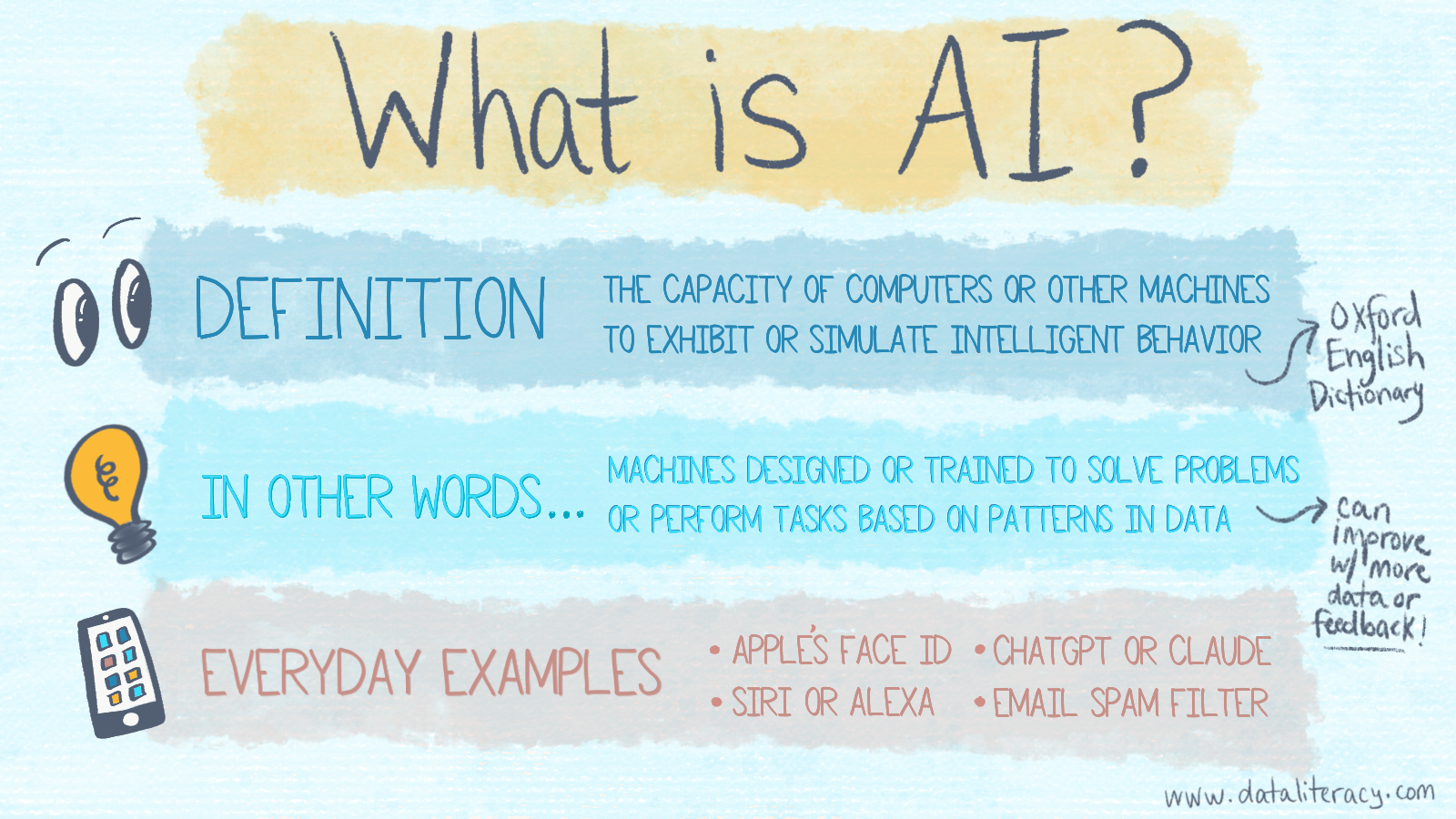

Alli Torban: Right. And it is kind of hard to get just one definition, one final, be-all, end-all definition of what artificial intelligence is. So yeah, before I helped you build our AI Literacy Fundamentals course, I probably would’ve defined AI as intelligent machines, like those robots you see in the movies that can interact with you. They’re talking to you. Maybe they’re making you breakfast. So that’s not typically what we’re talking about when we’re talking about AI right now.

So what do you think it actually is, Ben?

Ben Jones: Yeah, you’re right. There are so many examples in pop culture, especially as a child of the eighties and nineties. Mm-hmm. Where, you know, whether it’s Data on Star Trek or Johnny Five, you know, these examples of robots, some of them more friendly than others.

Mm-hmm. But we’re gonna see robotics coming out. We’re gonna see those types of applications here real soon. They exist already. They’re not as common as a lot of other types and forms of AI. But if we just start for a moment to say, let’s just kind of try to pin it down. Can we actually define it? Well, it’s interesting to go back to the very, very beginning of the formal field of AI, let’s say in the 1950s.

So, um, there was a Professor John McCarthy at Stanford. Uh, at the time he was, I believe, at Dartmouth, but he submitted an application for a, uh, a conference to take place in 1956. So in 1955, which is when he applied for the support and the funding for this Dartmouth workshop, as it’s become known, he laid out a definition.

He said it’s the science and engineering of making intelligent machines, especially intelligent computer programs. Mm-hmm. So I think going back to the person we call the father of AI for an early definition is probably good. And I think that this definition is still really useful. It still really holds. Of course, it begs the question, what does the word intelligent mean at all?

Um, so you know, we can look at other definitions as well. The dictionary itself, the Oxford English Dictionary, is calling it the capacity of computers or other machines to exhibit or simulate intelligent behavior. Okay, so not intelligent machines anymore, but rather intelligent behavior. Mm-hmm. I think that’s an important distinction.

Yeah. Because whether they themselves have intelligence the way human beings have intelligence is, I think, probably open for debate, but most likely they do not. I mean, we’re not talking about them having consciousness or sentience. Um, I think that that’s a common myth. We like to think about that, but you know, really it’s about them taking on tasks or doing jobs that would otherwise require some sort of intelligent human activity, right? Mm-hmm. And then they’re also now having that kind of agency, even maybe without having what we would define as human intelligence. So it gets into semantics a bit there, you know, and I think that that’s often where some of the confusion, um, can arise. But we can even look at other examples of definitions of AI, whether that’s from, you know, the US government or the EU. Um, but I think what we need to really settle with is just that, you know, these are agents. They are having some agency, let’s say. Um, they’re having some ability to take action in the world, and whether we, you know, like that or not, whether it’s helpful or not, is another matter.

But they’re taking actions and they’re doing things based on, uh, patterns they’ve discovered in data. And when I say discovered, it’s not really the same as when a human discovers something. I think that’s important to differentiate. We tend to, like in those eighties and nineties TV shows and before, we call it anthropomorphizing, uh, these machines, where we start to imbue them with human-like qualities.

Mm-hmm. The bottom line is they’re doing things, they’re doing jobs, uh, for us in some cases, to help us. Uh, and those jobs are being done based on these algorithms that we’ve created, right? That have been able to follow specific rules or discover certain patterns in the data, and then apply those patterns to get a job done.

Alli Torban: Mm-hmm. Yeah. And it is interesting how they use the word intelligence or intelligent in these definitions, and then it makes you think, what does it actually mean for a machine to be intelligent? And it made me think about, in our AI Literacy Fundamentals course, you taught me the AI effect, or the AI paradox, where we think a task requires intelligence until we teach a computer to do it, and then we move the goalposts and we don’t think that’s an intelligent task anymore.

Ben Jones: Yeah. It’s, you know, constantly changing. It’s this moving goalpost, like you said. Mm-hmm. So for example, if you go back to the 1990s, um, you know, Garry Kasparov, world chess champion, loses to IBM’s Deep Blue, uh, AI system. And that was the first example, really, of someone at the top of the chess world losing.

And up until then, it had widely been thought in AI circles that chess was a great example of a game that would be, like, a true test of AI, because it requires so much intelligence to play it well. And that was the thinking. And then as soon as Deep Blue beats Kasparov, okay, in the immediate aftermath of that, people were saying that it was this huge major win for AI.

But then not long after that, critics started to push back, saying, well, maybe Deep Blue is not really smart. It’s just some overpowered calculator that’s pointed to some gigantic database of chess moves. So they start to discount it. You know, they start to say, well, maybe it isn’t actually artificial intelligence.

And that’s just one example, because it seems like that is kind of always happening, right? Or certainly it has been happening in the first half century or so of the history of the field of AI, this constant moving and shifting of what it means to be AI, and it never quite lands on anything where it stays there.

It just keeps chasing itself, really.

Alli Torban: Yeah. So that’s interesting. I can see how it’d be hard to define what artificial intelligence is if we do keep moving the goalposts as we get used to the technology.

Ben Jones: Exactly. You know, and I mean, it’s definitely the case that since that Deep Blue example in the late nineties, there’s been a big shift.

So there’s been a revolution in the world of AI, uh, in the 21st century. You know, we call it the deep learning revolution, and this is where, um, models like a deep neural network that has a number of different layers, multiple layers of these artificial neurons connected, sometimes billions of them together, are trained on data.

So that is a new, uh, focus. Uh, they’ve been around actually since the sixties and seventies in a very primitive form, but with the rise of, you know, compute and the scaling of that, as well as all of the massive amounts of data we’ve been contributing, each one of us, with every photo we upload and every, mm-hmm, social media post and such, um, you know, now all of a sudden there’s this new opportunity to leverage those kinds of neural networks in new ways, and it has led to a huge revolution in the last couple decades, let’s say. Um, and so I think that that’s something to take note of, that the complex of AI is a little bit different now than what it was before 2000. Mm-hmm. Let’s say, like, 2012 was around the year where we saw a pivot point there, maybe. But, uh, but yeah, so it’s looking a little different now.
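To make the idea of "multiple layers of artificial neurons" a little more concrete, here is a minimal Python sketch of data flowing through a two-layer network. It is an illustration only, not how any production system is built: the weights are hypothetical numbers picked by hand, whereas a real deep learning model learns millions or billions of them from data.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of the inputs, adds a bias,
    and passes the result through the sigmoid function."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def forward(inputs, network):
    """Feed the inputs through every layer in order."""
    activations = inputs
    for weights, biases in network:
        activations = layer(activations, weights, biases)
    return activations

# Hypothetical weights, chosen by hand for illustration only.
# In a real system these numbers are learned from data during training.
network = [
    ([[0.5, -0.6], [0.1, 0.8], [-0.3, 0.2]], [0.0, 0.1, -0.1]),  # hidden layer: 3 neurons
    ([[1.0, -1.0, 0.5]], [0.0]),                                  # output layer: 1 neuron
]

output = forward([1.0, 0.0], network)
print(output)  # a single number between 0 and 1
```

Training, which this sketch leaves out, is the process of nudging those weights until the outputs match known examples.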

Alli Torban: Yeah. It’s moving so fast too. So in my mind, yeah, I’m thinking a good way of thinking about what artificial intelligence is: it’s basically machines designed and trained to [00:10:00] solve problems or perform tasks based on patterns of data. And it gets better over time when the systems have more data to be trained on, and we give them feedback too, sometimes.

Ben Jones: I think it doesn’t get a lot better than that, Alli.

I mean, I think you nailed it. Of course, maybe it could potentially get worse over time, depending on a few things. But in general, that’s the goal and that’s the trend, and that’s what we’ve seen. So I think you nailed it. I just can’t think of a way to improve on that definition.

Alli Torban: Mm-hmm. Yeah.

Ben Jones: From what you’ve got there.

Alli Torban: It’s a good thing to keep in mind, rather than just imagining, uh, robots traveling around your house.

Ben Jones: Yeah, exactly. Exactly. It’s more useful.

Alli Torban: Alright, so one thing that most people are surprised to learn, including myself, is that we are currently using AI probably daily, multiple times a day.

Oh yeah. Because it’s already integrated into so many of our products that we use.

Ben Jones: Yep, absolutely. I mean, it’s really everywhere. You know, if you’re looking at the captions here on this video, there’s a [00:11:00] chance they were created by AI, um, audio or speech to text. So, uh, it really is everywhere. Most people, a lot of people anyway, when you talk about AI, they immediately think about ChatGPT, the AI chatbot by OpenAI. It’s been out since November 2022. And I can understand that, because it’s gotten so much press and really a huge degree of adoption in a relatively short amount of time. But ChatGPT is just one very small example. It’s a powerful one, so I think it’s important to notice it, and it led to, uh, a lot of change for sure.

Mm-hmm. But it’s just the tip of the iceberg. I mean, so another everyday example we can probably all relate to: let’s say you’re watching Netflix or Hulu, and all of a sudden it makes a recommendation that you watch a certain show. Well, how does it know that? How does it know to make that recommendation for you?

And you know, of course that recommendation is totally different than what your, your aunt, uh, gets recommended, you know, across the country. [00:12:00] So it’s using a form of AI called a recommendation system. Also maybe a recommender, sometimes we call it that. And it’s trying to predict which shows you’re likely to want to watch and enjoy so they can keep you in the platform, keep you watching their content.

And so they’re training these systems on data from all of their users, like maybe the viewing history and whether they rated it, and maybe lots of other demographic variables as well. Um, that are about the different viewers of their platform. So recommendation systems, same thing on Amazon. Hey, you’d like this book, maybe you’d like that book.

That’s also another example that we, we run into, you know, pretty much every week if not every day.

Alli Torban: Mm-hmm. Yeah. And speaking of moving the goalpost, if you had told me, oh yeah, that’s AI, I’d be like, well, I mean, why wouldn’t it figure out what I like? I mean, we’ve had this for so long, uh, getting recommended different shows. Like, I’ve showed you what I’ve liked to watch.

Right, of course you would recommend similar shows to me.

Ben Jones: Exactly. Yeah. And you imagine it from their point of view. [00:13:00] Then what are they going to do? They have millions and millions of users. How can they write some rules-based program? Oh, if they like this show, automatically recommend that show.

Mm-hmm. That would be, I mean, there’s so many shows. They have this massive library, right? And a massive, uh, user base. So that’s what we call sparse data, where every user only watches a tiny percentage of the overall library. So actually, from a data point of view, it’s very challenging to write some rules-based program that says, okay, you know, if they like this, then recommend them that.

So that’s why AI is useful, because they can just train it on the actual, uh, viewing patterns themselves to, you know, create these recommendations.
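As a rough illustration of learning from viewing patterns instead of hand-written rules, here is a small Python sketch of one simple technique, user-to-user similarity. The users, show titles, and scoring method are all made up for this example, and streaming services use far more elaborate models, so treat it as a toy.

```python
from collections import defaultdict

# Hypothetical viewing histories: each user has watched only a few shows
# out of the whole library (the "sparse data" situation described above).
history = {
    "ana":   {"Space Drama", "Robot Comedy", "Baking Show"},
    "ben":   {"Space Drama", "Robot Comedy", "Alien Mystery"},
    "chris": {"Baking Show", "Garden Show"},
    "dee":   {"Space Drama", "Alien Mystery"},
}

def similarity(a, b):
    """Jaccard similarity: shared shows divided by all shows either user watched."""
    return len(a & b) / len(a | b)

def recommend(user, history, top_n=2):
    """Score each unseen show by how similar its viewers are to this user."""
    seen = history[user]
    scores = defaultdict(float)
    for other, shows in history.items():
        if other == user:
            continue
        sim = similarity(seen, shows)
        for show in shows - seen:
            scores[show] += sim
    # Highest score first; ties are broken alphabetically by title.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [show for show, score in ranked[:top_n] if score > 0]

print(recommend("ana", history))  # ['Alien Mystery', 'Garden Show']
```

Nobody wrote a rule saying "if you like Space Drama, recommend Alien Mystery". The suggestion falls out of the overlap between viewers, and it would shift on its own as more viewing data came in.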

Alli Torban: Yeah. So yeah, the more I give it feedback saying, yes, I like that. No, I didn’t like that, then it’ll start to learn and give me better recommendations.

Ben Jones: Yeah. And it’s the, you know, the network effect too.

Not only the more you do that, but the more everyone does that, right. It will continue to give you better [00:14:00] recommendations. Even if you don’t do that, it’ll still improve because it’s based on more, more data, more connections between users and their content. So,

Alli Torban: right, and another AI system I interact with a lot is Siri, or if you have an Alexa.

Ben Jones: Yep.

Alli Torban: I use Siri on my phone a lot, asking her to set a timer for me or to tell me what the weather’s like in the morning. And this AI system, it’s using voice to text, speech recognition, as well as natural language processing, also called NLP, algorithms, uh, in order to interact with me in this really, uh, natural way.

Ben Jones: And if you think about that, that’s a big challenge. What about accents? What about, you know, word choice? What about all these different ways? Maybe how loud you’re saying it, and if there’s background noise and all those sorts of things. It’s actually, you know, um, an example of a usage of AI that’s made massive strides in our lifetime.

And maybe in the early days, you remember if you used an earlier version of Siri or [00:15:00] Alexa, it would often misunderstand your question and it still does to some degree. Um, but it’s certainly improved quite a lot with some of the newer, uh, models and approaches. Mm-hmm. Um, but so another thing that’s thankfully improved a lot is email spam filtering.

So, mm-hmm. It could always get better. This is a little bit of a race, you know. Uh, but you’re gonna log into Gmail or Yahoo or whatever. Now your email platform, it is using a spam filter. So this is also using these, um, artificial neural networks, as well as, you know, multiple layers of other kinds of technologies.

But these neural networks are learning what kinds of words and phrases are commonly showing up in known spam emails that they’re trained on. But you know, again, this is this cat-and-mouse race, because the people making the spam, they’re constantly adjusting, changing their approaches, changing the words they’re using.

And so then these spam filters need to continually be updated, right? To kind of stay in front of it a little [00:16:00] bit. Um, same is true of credit card fraud. Um, you know, we’ve all gotten that text or phone call from our bank saying, did you make this transaction? And so it’s noticing something different about that particular transaction, so it’s being flagged, and it’s AI again that is suggesting those flags.

It isn’t someone watching the transactions. It isn’t some rules-based approach that says if you do this, then do that. Mm-hmm. There are some rules-based, um, components to these, like, multiple layered systems, but a lot of it is AI kind of learning what patterns are likely to be associated with a spam email or a fraudulent credit card transaction.

So we all benefit from that.
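For a sense of what "learning which words show up in known spam" can look like, here is a small Python sketch. Note the swap: it uses a simple word-counting method (naive Bayes) and not the neural networks mentioned above, and the six training emails are invented for the example. Real filters train on vastly more data and combine many signals.

```python
import math
from collections import Counter

# Hypothetical, tiny training set of already-labeled emails.
training = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting notes for monday", "ham"),
    ("lunch on monday", "ham"),
    ("notes from the team meeting", "ham"),
]

def train(examples):
    """Count how often each word appears in spam and in non-spam ("ham")."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Pick the label whose learned word patterns best match the text."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_examples = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in ("spam", "ham"):
        total_words = sum(word_counts[label].values())
        # Start from how common the label is, then add evidence per word.
        score = math.log(label_counts[label] / total_examples)
        for word in text.lower().split():
            # Add-one smoothing so an unseen word does not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

word_counts, label_counts = train(training)
print(classify("free prize inside", word_counts, label_counts))    # spam
print(classify("monday team meeting", word_counts, label_counts))  # ham
```

Because the filter only knows the words it was trained on, spammers who change their wording can slip past it until it is retrained, which is the race described above.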

Alli Torban: Mm-hmm. Yeah, that’s, that’s for sure. And another AI system, similar to the banking example, is when we’re depositing checks on our phone. So our phones are using optical character recognition or OCR when we take a picture of a check to deposit it into our bank account. So that’s an easy [00:17:00] example that we’re probably all using all the time.

Ben Jones: Yeah. And it sounds so simple ’cause it is such an everyday thing. Mm-hmm. And again, this is the, that AI, I’m taking a picture of it. Yeah, I know, right? Of course they should know the number. But maybe the number’s in a different place on the check, or maybe it’s a blue pen or a black pen, or the way you write the letters is different than the way I do.

So now the systems are getting to the point where they can become pretty accurate, you know, at recognizing what we’ve written. And, uh, when we were kids, you and I anyway, in the eighties, that wasn’t the case. I mean, those systems would really struggle with a task like that, you know? And so again, it was considered this, like, true test of AI, and now it just seems like it’s obvious that it should do that. Mm-hmm. Um, another example, of course, we’re all, um, you know, kind of probably using some kind of facial recognition. Um, like let’s say if you use an iPhone like I do, it has a Face ID feature. Uh, what I love about it is it’s actually located on the phone itself, not in the cloud or what have you, which is great ’cause [00:18:00] it’s all about, you know, privacy and security.

But what is it doing? It’s using the camera, the front-facing camera on your phone, and placing tiny, invisible infrared dots on your face, getting a 3D image of your face, and then using that technology right there on the phone itself to try to identify if it’s the correct face or not. Um, and so, you know, again, it’s a very useful kind of application.

Most of us have gotten used to it and just assume that it’s a normal thing that a product like a phone could do. But it wasn’t long ago that that was a breakthrough. Mm-hmm. So, you know, that’s just everyday usage. We could also talk about the way companies are leveraging AI in really breakthrough ways right now, ever since, again, you know, this deep learning revolution.

And then of course the, uh, large language models like ChatGPT that came out a few years ago. And now we’re seeing companies, um, drive breakthroughs with AI: drug [00:19:00] discovery, early discovery of diseases, even autonomous vehicles and what they’re doing on the road now, you know, continuing to increase in capabilities. And I think that that’s something that we’re just gonna continue to see here in the next few years.

Alli Torban: Yeah, it’s really amazing how far we have come, and just thinking about the potential of all these systems on our daily lives in the future, it’s gonna be really interesting. And I’m very excited to see.

Ben Jones: Yeah, because, you know, I agree, and in some ways it’s exciting and in other ways it’s a little scary.

Mm-hmm. Um, you know, because maybe the guardrails aren’t in place yet and, um, maybe they’re not quite accurate enough, or maybe there are lots of other problems we’ll talk about in a moment. But I think it’s exciting. I think it’s frightening. I think the game then is to stay involved and educated and as AI literate as we possibly can be, so we can be part of that conversation.

Alli Torban: Yeah, I think that’s a good transition. Let’s talk a [00:20:00] little bit about some AI myths and misconceptions. So AI’s really seeped into our world already, as we’ve talked about, and there’s some really interesting places that it’s going. But let’s talk about, um, a myth or a misconception someone might have.

Ben Jones: Yeah. Yeah, I think it’s common. Okay. One of the most common ones, maybe it’s actually been talked about a lot, so people should hopefully not have this myth, but I still think they do. And that is that AI systems are somehow objective, you know, or unbiased because they’re computers and because they’re based on data, so therefore they must be reliable and objective.

But that’s not the case at all. You know? Um, of course the data itself is collected from a world with all kinds of biases and errors and inaccuracies, and, you know, that can be perpetuated by the AI system. That can even be amplified by it. I just saw a post today about how one of the chatbots [00:21:00] continued to reference a cancer, uh, tumor detection paper that had been retracted.

So the paper itself had been retracted, but the AI still referenced it, right?

Alli Torban: Yeah. It was trained on it already. Exactly. Mm-hmm. So,

Ben Jones: exactly. So then it didn’t know, it wasn’t able to adjust quickly enough to remove it from, its from its weights really. That’s the problem, is that it’s in these weights of these, these parameters that are, it’s tough to, um.

These, these, these neurons, these artificial neurons, you can’t just go and say, oh, remove it, and then it’s done. It’s like, it’s been trained on it. It’s part of its training dataset. So again, you know, it’s only one small example of all the ways that bias and unfairness can be propagated. Um, with ai it’s, it’s a big concern.

Uh, and of course, you know. Another concern is that it’s been trained on copyrighted content. Um, [00:22:00] this article just came out in the Atlantic recently, um, allowing people to search. A corpus called Lib Gen, which is a massive amount of pirated books that were pulled together and compiled Meta used that to train its llama family of LLM models.

And you know, even like the AI literacy fundamentals book that’s in the course is part of this massive corpus, along with, you know, millions and millions of others. I mean, it’s just one of, it’s just a drop in the ocean there. But you know, those. Books are copyrighted. Most of them. Many of them. And you know, there was no attribution, there was no credit, there was no permission.

There was certainly no compensation. So that’s a big question right now, you know, to what degree? Is this content that’s copyrighted that’s also been used to train the models? To what degree is that an example of fair use versus unfair use? It’s being fought out in the courts right now, and of course many people have strong opinions about that one way or another, but that’s something to call in attention [00:23:00] to, I think.

And it’s something we’re gonna see some more discussion about that here coming down the road.

Alli Torban: Yeah. And your examples are about books, but also if you think about art. Yeah, exactly. Yeah. Mm-hmm. People’s artwork, their unique styles, their skill has trained some of these models, and then I can say, make me an image of X, Y, and Z in the style of this person.

And you know, if it’s Picasso, you know, who really cares, because he’s long gone.

Ben Jones: And maybe his art’s in the public domain. Mm-hmm. Yeah.

Alli Torban: But people making money on their work right now, I can just emulate them with no problem, with no skill, in their personal style.

That’s another aspect where it makes people really mad. It makes me mad. I’m concerned.

Ben Jones: Yeah. And I think it’s a legitimate beef that people have, because, yeah, especially because in a lot of cases, those tools are being used to compete against the very people, mm-hmm, whose content was used to train that tool. So it seems to be, on some conceptual level, or at least on an emotional level, let’s say, uh, an example of what intellectual property was designed to protect the original creator from, that exact scenario, you know? Right. But, uh, again, we’ll see where it all goes. I mean, what’s different is the capabilities of these, um, generative AI applications like ChatGPT, like Midjourney for art, a lot of the video production, um, kinds of models now, um, that are being integrated into these, you know, um, types of systems with multiple capabilities, like, say, Google’s Gemini. Um, you know, those are, I think, questions that we are going to need to solve pretty quickly here. Mm-hmm. Because, um, those capabilities are amazing. Um, they’re not always great. Sometimes I don’t love them. Sometimes they can be turnoffs for me. I see an image and I can tell it’s generated by AI and I don’t love it.

But in any case, yeah, I think that the capabilities are something we’ve never seen before. But again, is it fair, or was it not fair, that that copyrighted content has been used in that way to train it?

Alli Torban: Yeah. I think

Ben Jones: that’s a question we’re gonna have to answer.

Alli Torban: Yeah. A really important question for a lot of people.

Yeah. And another important myth is that AI can be trusted to make important decisions autonomously. Mm-hmm. In reality, AI systems, they’re really just making statistical predictions, not reasoned judgments. So they really do require some kind of human oversight, especially for those big decisions affecting people’s lives. And these systems can hallucinate and give you incorrect information. And not everybody’s taking such a critical eye to the outputs that AI systems are giving them.

Ben Jones: Yeah. We need to question everything it gives us, and you know, that’s something we need to talk about, that it’s something that is super important that [00:26:00] people are critically evaluating the outputs of AI systems.

And so the last myth we will share is that you need some kind of super advanced technical skills to work with AI, when in reality you do need some technical expertise to develop AI, that’s for sure, and there’s some very brilliant minds at work on that. But working effectively with AI as, say, a user of an AI system, that requires AI literacy rather than, you know, a PhD or programming skills or something like that. So it’s useful to understand these general capabilities. It’s really important to understand the limitations. And also we have to get to a place where we can, um, decide for ourselves what is an appropriate use case versus what is inappropriate.

Alli Torban: Right, right. We have just scratched the surface of what AI is in this episode. So let’s do a quick recap. Okay. We talked a little bit about some basic definitions of AI, um, and that [00:27:00] we have a tendency to move the goalpost when we’re talking about what is intelligence, and we use AI daily in things like Netflix, Siri, Apple’s Face ID. We are going to very amazing places with the deep learning revolution. And there’s also a lot of myths and misconceptions out there, like AI is unbiased, it can make decisions autonomously, or you need a lot of technical skills to work with AI. Those are all myths. And I hope that this small bit of, uh, AI literacy was a good primer for you, our listener, to join the conversation about AI, especially on National AI Literacy Day.

Ben Jones: That’s right. That’s right. So appropriate. Right. So, and of course, if you want to learn more, we have a course, we have a series of courses actually, that you or your team can take. There’s AI Literacy Fundamentals and the book that goes along with that. The next course is Harnessing Generative AI. The third course is a really important one called Advancing Responsible AI.

And so, um, you know, these are ways you can really dive in, and we’re willing to work with you all, uh, with your organization, let’s say, to, uh, customize these courses to really fit and be tailored to your own use cases. So that’s what we’re all about.

Alli Torban: Yeah, and Ben really explains these concepts in a very easy to understand way.

You don’t have to be a technical genius to understand these. They’re broken down really nicely. I created a bunch of diagrams to help support your learning, so you can read about it, you can see it in diagram form, and you can go to dataliteracy.com and go to the training tab. You can see all these courses and more.

So thanks for tuning in to the Data Literacy Show. If you found this episode helpful, share it with someone who’s also working on improving data and AI literacy in their organization.

Ben Jones: That’s right. And hey, don’t forget to subscribe so you don’t miss the next episode, or any one after that. And everything we mentioned today, you can find right there in the show notes at dataliteracy.com.

Alli Torban: All right, see you next time. Bye.

Ben Jones: See you next time, everyone. Bye-bye.