Trying out Code Interpreter for ChatGPT

Today I had the chance to experiment with an early alpha release of OpenAI's Code Interpreter plugin for ChatGPT. Code Interpreter is “an experimental model that can use Python, and that handles uploads and downloads.” You can find information about this plugin here, and you can add your own name to the waitlist to get access to this plugin here.

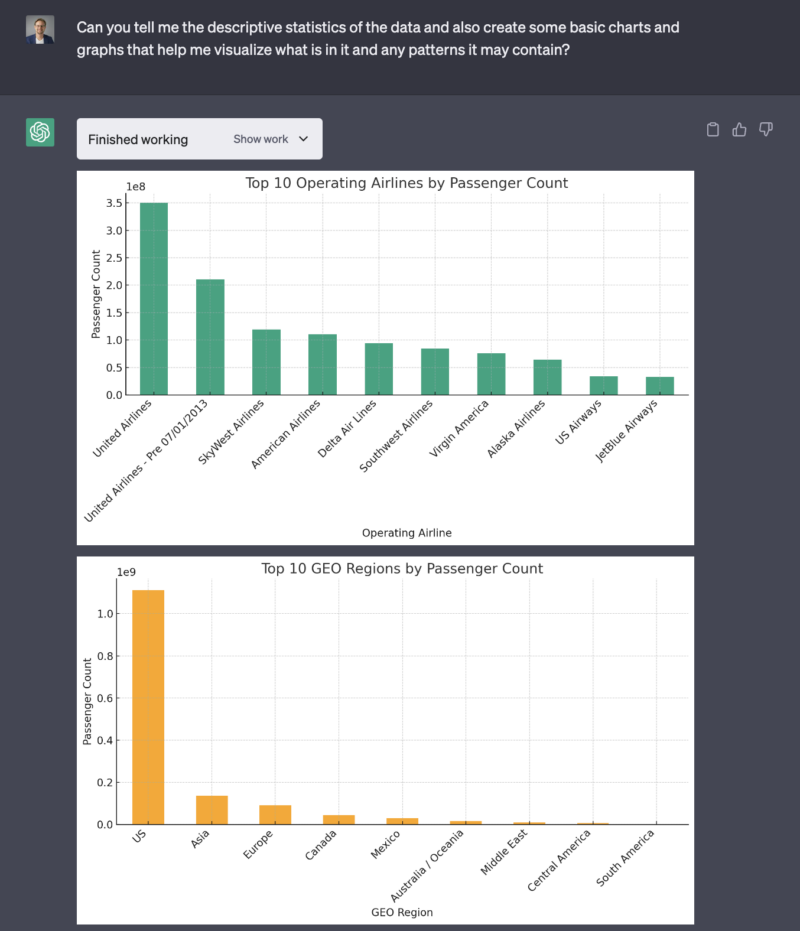

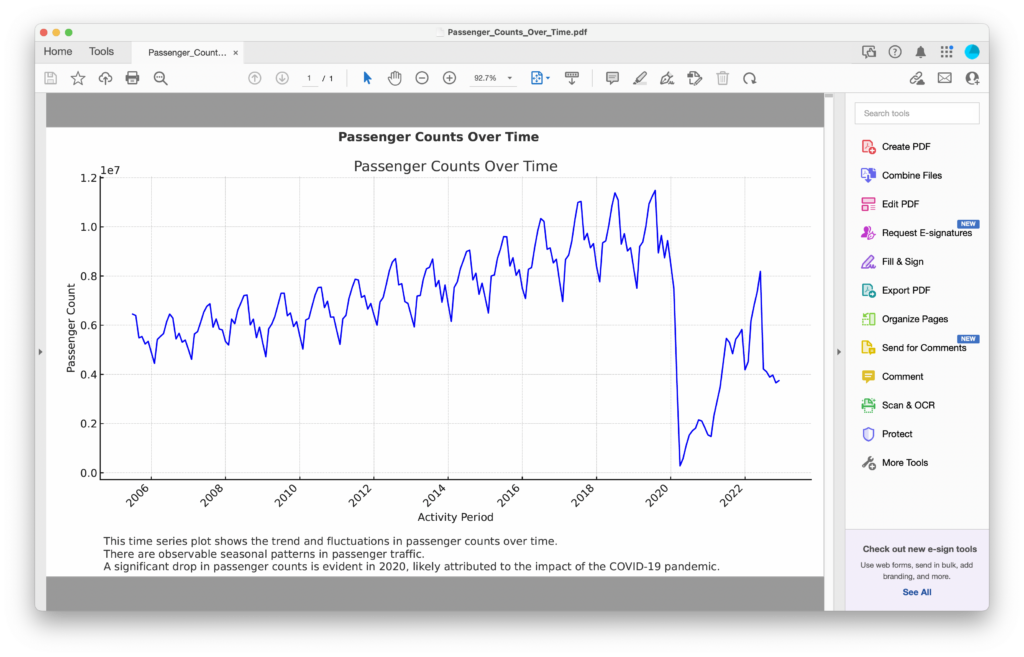

To take this model on a test flight, I used the Air Traffic Passenger Statistics CSV file on data.gov that shows monthly passenger counts for airlines going into and out of San Francisco International Airport from July 2005 through the end of last year.
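Here is a minimal Python sketch of the per-year aggregation that a question like “how many passengers flew each year?” calls for. It is an illustration, not the code Code Interpreter actually produced. It assumes the CSV has an `Activity Period` column in YYYYMM form and a `Passenger Count` column; the sample rows (including the `Operating Airline` values) are made up.

```python
import csv
import io
from collections import defaultdict
from typing import TextIO

# Made-up sample rows. The column names "Activity Period" (YYYYMM),
# "Operating Airline", and "Passenger Count" are assumptions about the
# data.gov file's schema.
SAMPLE_CSV = """Activity Period,Operating Airline,Passenger Count
201912,Airline A,1000
201912,Airline B,500
202001,Airline A,900
202004,Airline A,100
"""


def passengers_by_year(stream: TextIO) -> dict[int, int]:
    """Sum the Passenger Count column per calendar year."""
    totals: dict[int, int] = defaultdict(int)
    for row in csv.DictReader(stream):
        year = int(row["Activity Period"][:4])  # YYYYMM -> YYYY
        totals[year] += int(row["Passenger Count"])
    return dict(sorted(totals.items()))


if __name__ == "__main__":
    print(passengers_by_year(io.StringIO(SAMPLE_CSV)))  # {2019: 1500, 2020: 1000}
```

To run it against a downloaded copy of the file, pass in a file object opened with `open(path, newline="")` instead of the `StringIO` sample.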

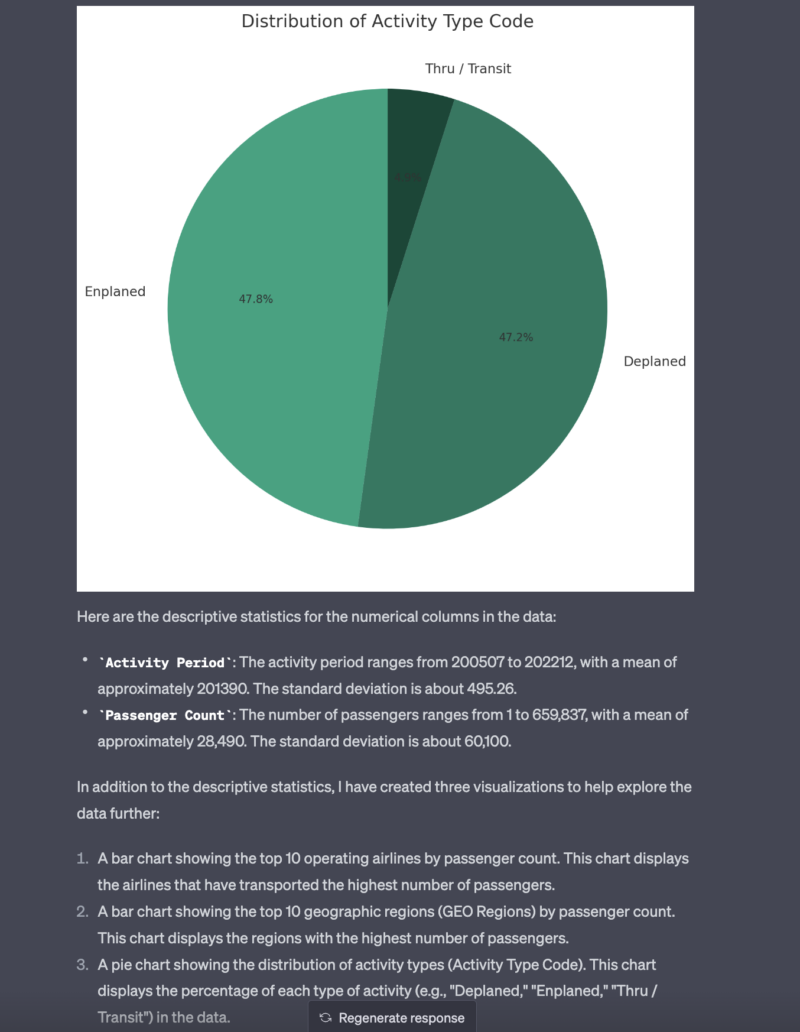

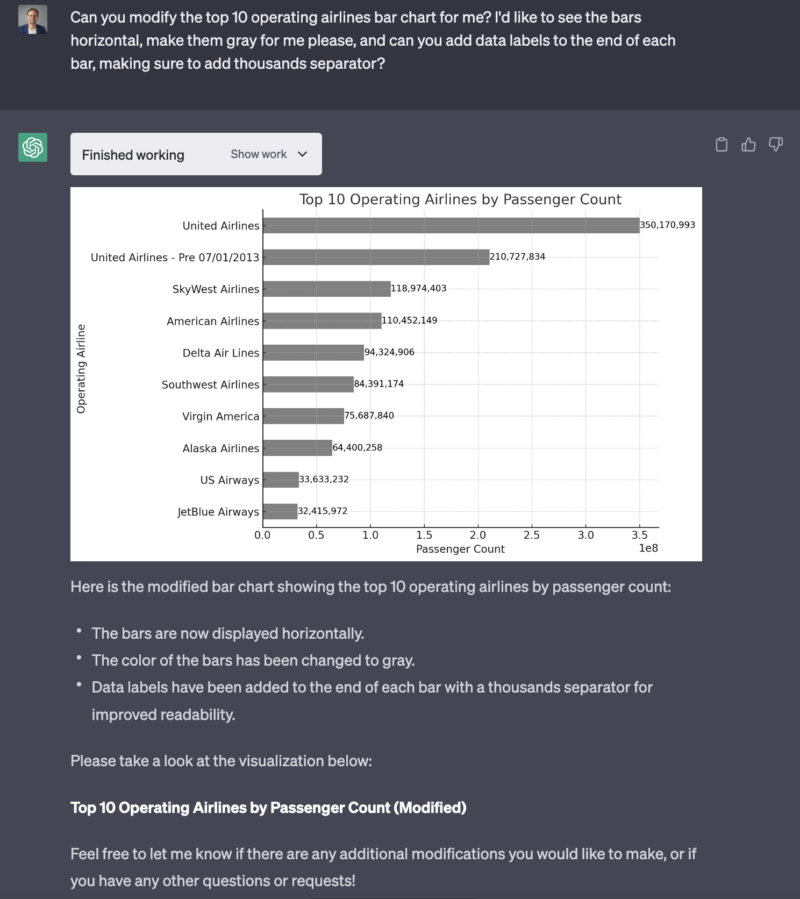

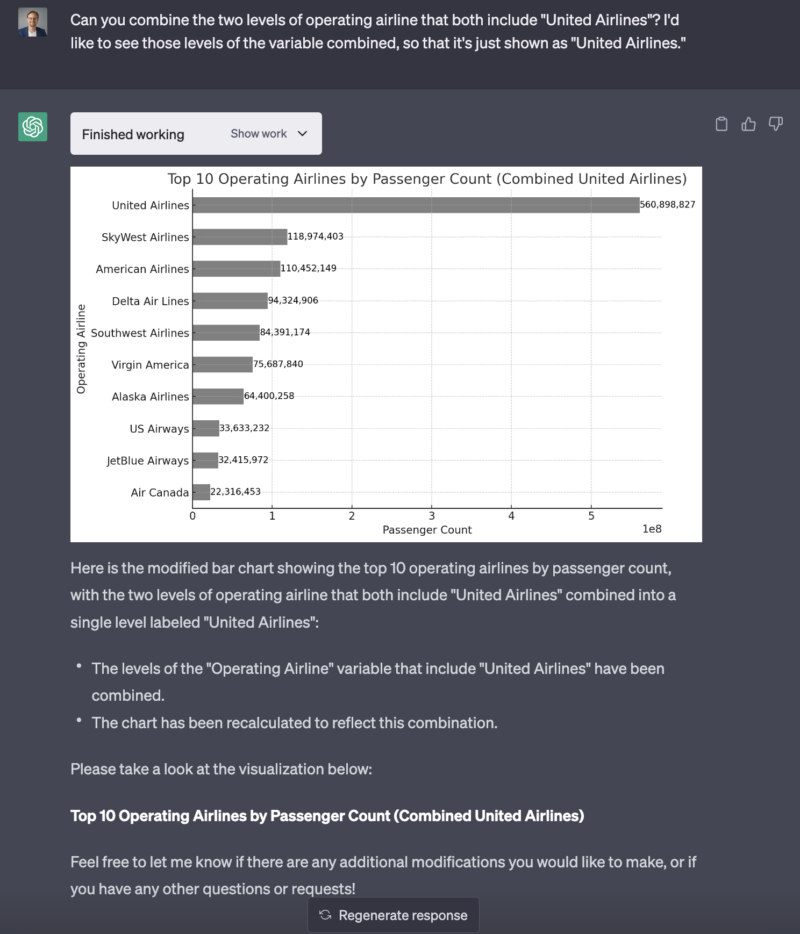

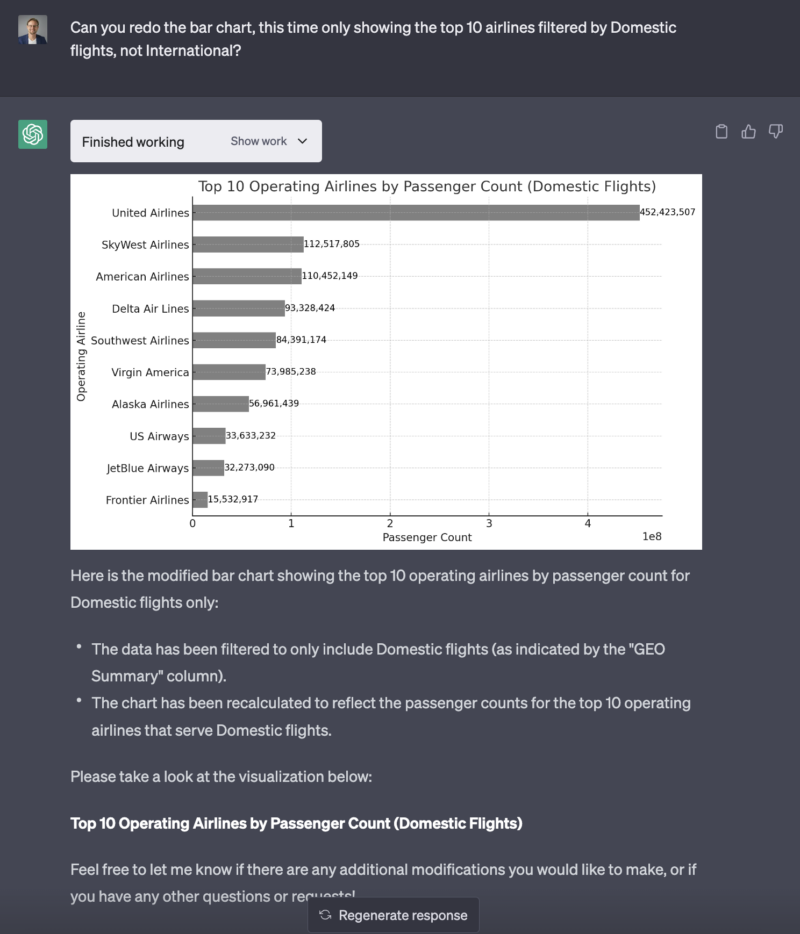

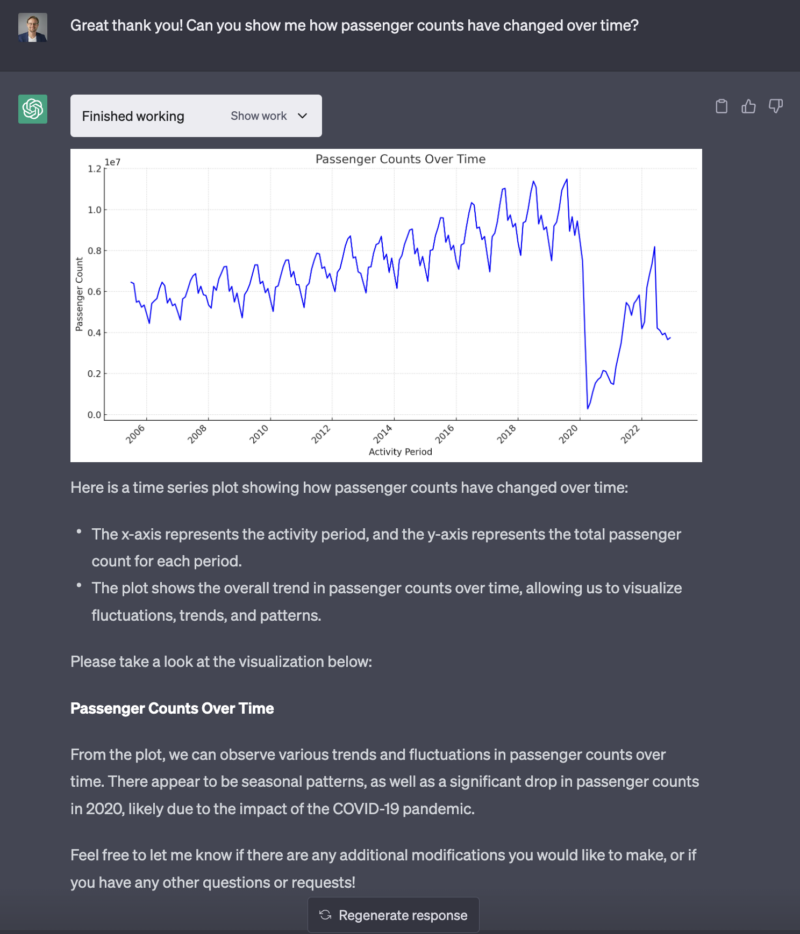

As shown in the video above and in the screenshots and code below, I uploaded this 50K-row spreadsheet to see what Code Interpreter could do, then asked it questions about the data and asked it to analyze the data and create charts for me. In a nutshell, I found its capabilities to be totally mind-blowing and groundbreaking. Never in my career have I been able to analyze and visualize data with code through such a conversational interface.

The kicker, to me, was when it inferred what must have caused the drop in passenger counts in 2020. The data set itself includes nothing whatsoever about COVID-19, yet ChatGPT with Code Interpreter identified COVID-19 as the reason for that year's huge drop in passenger counts. Of course, the pandemic began before September 2021, the upper time bound of its training data (largely internet sites). But one can imagine how OpenAI might combine the Code Interpreter plugin with the internet browsing plugin to come up with plausible explanations for signals it finds in the data.
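Spotting the 2020 drop is the part code can do on its own; explaining it is where the model's background knowledge comes in. As a minimal sketch, assuming the data has already been reduced to yearly totals, the year with the steepest year-over-year decline can be flagged like this. The totals below are invented, not SFO figures, and the helper names are hypothetical.

```python
# Hypothetical yearly totals, e.g. the output of a per-year aggregation.
# These numbers are invented for illustration, not SFO figures.
SAMPLE_TOTALS = {2018: 100, 2019: 110, 2020: 30, 2021: 45}


def year_over_year_change(totals: dict[int, int]) -> dict[int, float]:
    """Percent change for each year relative to the previous year present.

    Assumes every total is nonzero.
    """
    years = sorted(totals)
    return {
        curr: (totals[curr] - totals[prev]) / totals[prev] * 100
        for prev, curr in zip(years, years[1:])
    }


def largest_drop(totals: dict[int, int]) -> tuple[int, float]:
    """Return the year with the most negative year-over-year change."""
    changes = year_over_year_change(totals)
    year = min(changes, key=changes.__getitem__)
    return year, changes[year]


if __name__ == "__main__":
    year, pct = largest_drop(SAMPLE_TOTALS)
    print(f"Largest drop: {year} ({pct:.1f}%)")  # Largest drop: 2020 (-72.7%)
```

Note that the code only says *that* a drop happened and how large it was; nothing in the numbers says *why*.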

In this scenario, the data itself is fairly clean, and the analysis itself is fairly basic. But Code Interpreter took my questions about the data – both very broad questions and very narrow ones – and successfully generated Python code that allowed me to learn a great deal about the data set in a short amount of time. My attempts to find inaccuracies by cross-checking the numbers in Tableau came up empty: Code Interpreter's output was accurate.
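A cross-check like the one done in Tableau can also be scripted: compute totals one way, export the same totals from a second tool, and list any years that disagree. A minimal sketch, assuming both sides have already been reduced to year-to-count dictionaries; `find_mismatches` is a hypothetical helper and the numbers are invented.

```python
def find_mismatches(
    computed: dict[int, int],
    reference: dict[int, int],
    tolerance: int = 0,
) -> list[int]:
    """Years missing from either side, or whose totals differ by more than tolerance."""
    mismatches = []
    for year in sorted(computed.keys() | reference.keys()):
        a, b = computed.get(year), reference.get(year)
        if a is None or b is None or abs(a - b) > tolerance:
            mismatches.append(year)
    return mismatches


if __name__ == "__main__":
    # Invented numbers: "computed" stands in for totals from generated code,
    # "reference" for totals exported from a second tool such as Tableau.
    computed = {2019: 1500, 2020: 1000}
    reference = {2019: 1500, 2020: 1001}
    print(find_mismatches(computed, reference))               # [2020]
    print(find_mismatches(computed, reference, tolerance=1))  # []
```

An empty list means the two sources agree within the chosen tolerance.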

This does not mean that it’s a perfect tool by any means, and fact-checking will be critical for the foreseeable future. But it didn’t seem to exhibit the kinds of hallucinations seen in earlier attempts at data analysis using ChatGPT. LLMs may not be great at math, but they are great at language, and code is a form of language. So an LLM can write code to analyze your data: upload your data, submit questions as prompts, and then watch it generate code that answers your questions.

Amazing, terrifying.

The following screenshots show my interaction with ChatGPT’s Code Interpreter plugin. The snippet below each image is the Python code that Code Interpreter generated to answer the prompt shown in that image. This code is what you would see if you opened the “Show work” dropdown for each prompt.